Computational Photography

Final Project: Tiny Planets

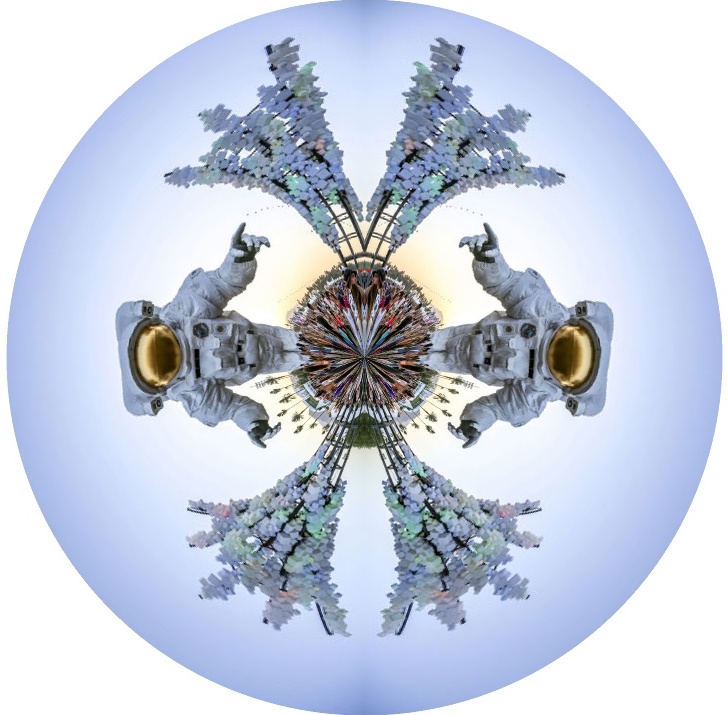

For a final project, I decided to implement a Tiny Planet computational photography pipeline.

This pipeline involves a few pre-processing steps to optionally mirror, flip, and/or square the input image.

An output image is prepared, and inverse warping is used to compute, for each output pixel, which input pixel to sample.

Conceptually, this technique is based on stereographic projection and is heavily dependent on trigonometry.
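
As a rough illustration of the inverse warp, here is a minimal NumPy sketch. It is not the project's actual code. It assumes the input is an equirectangular panorama whose bottom row is the ground (nadir), uses nearest-neighbour sampling, and introduces a hypothetical `scale` parameter to control how large the planet appears. Each output pixel is converted to polar coordinates, the inverse stereographic projection turns its radius into an angle from the nadir, and that angle and the polar angle select the input row and column.

```python
import numpy as np

def tiny_planet(src, out_size=256, scale=0.25):
    """Inverse-warp an equirectangular image into a square 'tiny planet'.

    Assumptions (not from the original project): the bottom row of `src`
    is the nadir, sampling is nearest-neighbour, and `scale` is a
    hypothetical zoom control.
    """
    h, w = src.shape[:2]

    # Output pixel grid, centred on the image and normalised to about [-1, 1].
    ys, xs = np.mgrid[0:out_size, 0:out_size]
    u = (xs - (out_size - 1) / 2) / (out_size / 2)
    v = (ys - (out_size - 1) / 2) / (out_size / 2)

    # Polar coordinates of each output pixel.
    r = np.hypot(u, v)
    theta = np.arctan2(v, u)

    # Inverse stereographic projection: plane radius -> angle from the nadir.
    alpha = 2 * np.arctan(r / (2 * scale))  # in [0, pi)

    # Polar angle selects the column, angle from the nadir selects the row.
    col = ((theta + np.pi) / (2 * np.pi) * w).astype(int) % w
    row = np.clip(np.round((1 - alpha / np.pi) * (h - 1)).astype(int), 0, h - 1)

    return src[row, col]
```

The centre of the output samples the bottom row of the panorama, and pixels farther from the centre sample rows closer to the top, which is what wraps the horizon into a circle.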

Ideally, I'd like to implement this pipeline in an iPhone app so I can take advantage of the onboard cameras and built-in software.

Epsilon Photography

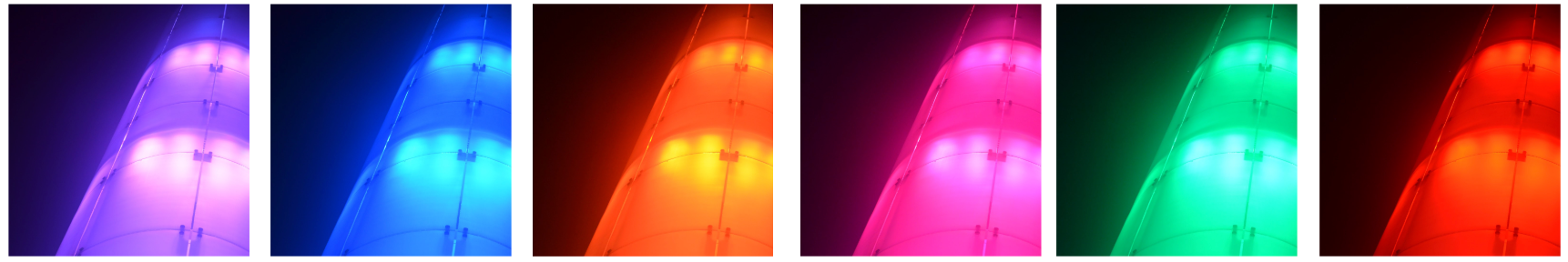

Epsilon photography is the process of capturing multiple images in sequence while slightly varying one parameter such as exposure, focus, or aperture.

In this project, I took a time sequence of photographs of Pylon #0 at the LAX Kinetic Light art installation at Los Angeles International Airport by mounting a flexible tripod on a nearby valve system and firing the shutter with a remote.

The final artifact is an animated GIF combining 40 images taken manually from the base of the pylon over the course of about 15 minutes.

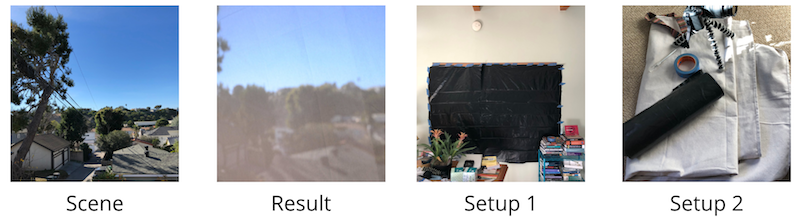

Camera Obscura

The camera obscura is the foundational concept for how modern cameras and even human eyes capture light and form images.

For this project, I recreated this technique in a studio apartment and captured a nearby sand dune scene.

As a reference, I visited a variety of local camera obscura installations around Los Angeles as well as an Abelardo Morell exhibit.

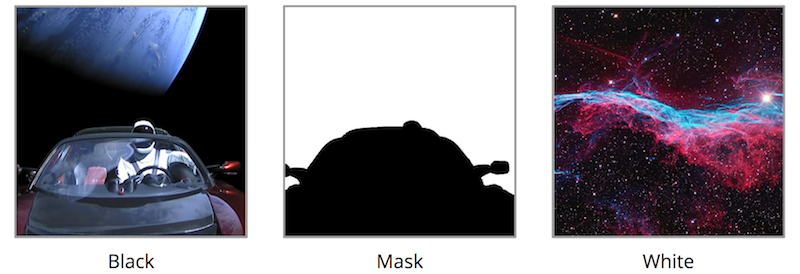

Blending

The code for this project is based on a concept called "image pyramids", which are used in a wide variety of applications such as computer vision (optical flow), image processing (blending), and even image compression.

After a tiny bit of research, I also found out that image pyramids are the basis for mipmaps, a common texturing technique in games and simulations that can speed up rendering and reduce artifacts such as aliasing. As an example, mipmaps are a feature of Unity3D and other game engines.

At its core, the technique creates two sets of image pyramids: Gaussians and Laplacians.

Gaussians are based on the original image: each level is a weighted (blurred) and subsampled version of the preceding level, producing progressively lower-resolution versions at each level.

Laplacians are generated by subtracting Gaussians at adjacent levels (after expanding the coarser level to the size of the finer one), creating difference images similar to a difference of Gaussians.

The top level of the Laplacian pyramid is the same as the top level of the Gaussian pyramid: a very low-resolution version of the original image.

By expanding and adding each layer of the Laplacian pyramid, starting from the top, you can get back to the original image, which is why this technique is leveraged for image compression.

Additionally, there is an added blend step in this pipeline which uses a grayscale mask to blend images at each level of the pyramid before expanding the result.

This creates a better final product as the blend is done at multiple layers and frequencies, rather than just the full resolution image.
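
The pyramid construction, reconstruction, and blend steps described above can be sketched in plain NumPy. This is a minimal illustration under stated assumptions, not the project's code: it handles single-channel float images only, uses a 5-tap binomial kernel as the Gaussian weighting, and expects the mask as floats in [0, 1]. All function names (`blur`, `reduce_level`, `expand_level`, `collapse`, `blend`) are hypothetical.

```python
import numpy as np

# 5-tap binomial approximation of a Gaussian (an assumed choice of weights).
KERNEL = np.array([1, 4, 6, 4, 1], dtype=float) / 16

def blur(img):
    """Separable blur with reflected borders; output has the input's shape."""
    padded = np.pad(img, 2, mode="reflect")
    rows = np.apply_along_axis(
        lambda r: np.convolve(r, KERNEL, mode="valid"), 1, padded)
    return np.apply_along_axis(
        lambda c: np.convolve(c, KERNEL, mode="valid"), 0, rows)

def reduce_level(img):
    """Blur, then keep every second row and column."""
    return blur(img)[::2, ::2]

def expand_level(img, shape):
    """Upsample to `shape` by zero insertion, then blur (x4 restores brightness)."""
    up = np.zeros(shape, dtype=float)
    up[::2, ::2] = img
    return 4 * blur(up)

def gaussian_pyramid(img, levels):
    pyramid = [np.asarray(img, dtype=float)]
    for _ in range(levels - 1):
        pyramid.append(reduce_level(pyramid[-1]))
    return pyramid

def laplacian_pyramid(img, levels):
    g = gaussian_pyramid(img, levels)
    # Each level is the detail lost between two adjacent Gaussian levels.
    laps = [g[i] - expand_level(g[i + 1], g[i].shape) for i in range(levels - 1)]
    return laps + [g[-1]]  # the top level is the coarsest Gaussian level

def collapse(laplacians):
    """Expand and add from the coarsest level down to full resolution."""
    img = laplacians[-1]
    for lap in reversed(laplacians[:-1]):
        img = lap + expand_level(img, lap.shape)
    return img

def blend(a, b, mask, levels=3):
    """Blend a and b level by level; mask is 1 where `a` should show."""
    la, lb = laplacian_pyramid(a, levels), laplacian_pyramid(b, levels)
    gm = gaussian_pyramid(mask, levels)
    return collapse([m * x + (1 - m) * y for x, y, m in zip(la, lb, gm)])
```

Because the mask is itself turned into a Gaussian pyramid, coarse image structure is mixed over a wide transition while fine detail is mixed over a narrow one, which is what hides the seam.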

For example, this technique is used in professional image processing software, such as Photoshop.

This technique is largely based on the commonly cited computer vision paper from the 1980s: Burt and Adelson, “A multiresolution spline with application to image mosaics”, ACM Transactions on Graphics, 2(4), 1983.

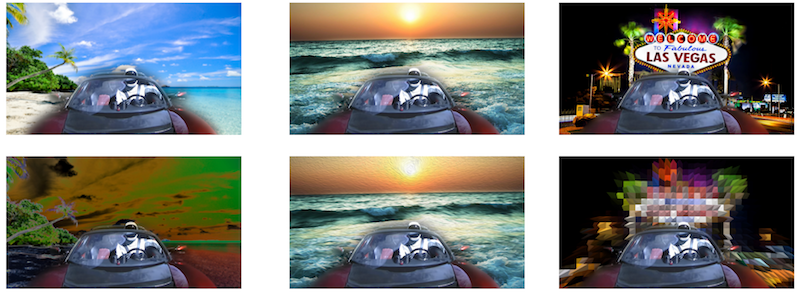

Panoramas

In this project, I used some of the built-in tools in OpenCV to build a pipeline that detects features and computes homographies in order to warp images and create panoramas.
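
To make the homography step concrete, here is a small NumPy sketch of the direct linear transform (DLT), which estimates a homography from four or more point correspondences. This is a stand-in for illustration only: the project used OpenCV's built-in tools (OpenCV offers `cv2.findHomography` for this, though the write-up does not say which calls were used), and a real pipeline would also need feature matching and outlier rejection. The function names are hypothetical.

```python
import numpy as np

def estimate_homography(src_pts, dst_pts):
    """Estimate H (3x3) such that dst ~ H @ src, using the DLT.

    Needs at least 4 correspondences and assumes they are all correct
    (no outlier rejection such as RANSAC is performed).
    """
    rows = []
    for (x, y), (u, v) in zip(src_pts, dst_pts):
        # Two linear constraints on the 9 entries of H per correspondence.
        rows.append([-x, -y, -1, 0, 0, 0, u * x, u * y, u])
        rows.append([0, 0, 0, -x, -y, -1, v * x, v * y, v])
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(np.asarray(rows, dtype=float))
    h = vt[-1].reshape(3, 3)
    return h / h[2, 2]  # fix the arbitrary scale so that H[2, 2] == 1

def apply_homography(h, pts):
    """Map an (N, 2) array of points through H with a perspective divide."""
    pts = np.asarray(pts, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))]) @ h.T
    return homog[:, :2] / homog[:, 2:3]
```

Once H is known, each pixel of one image can be mapped into the other image's frame, which is the warp that lines the images up before they are composited.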

As an extension, I would like to implement Poisson blending and get a better handle on the built-in image stitching pipeline.

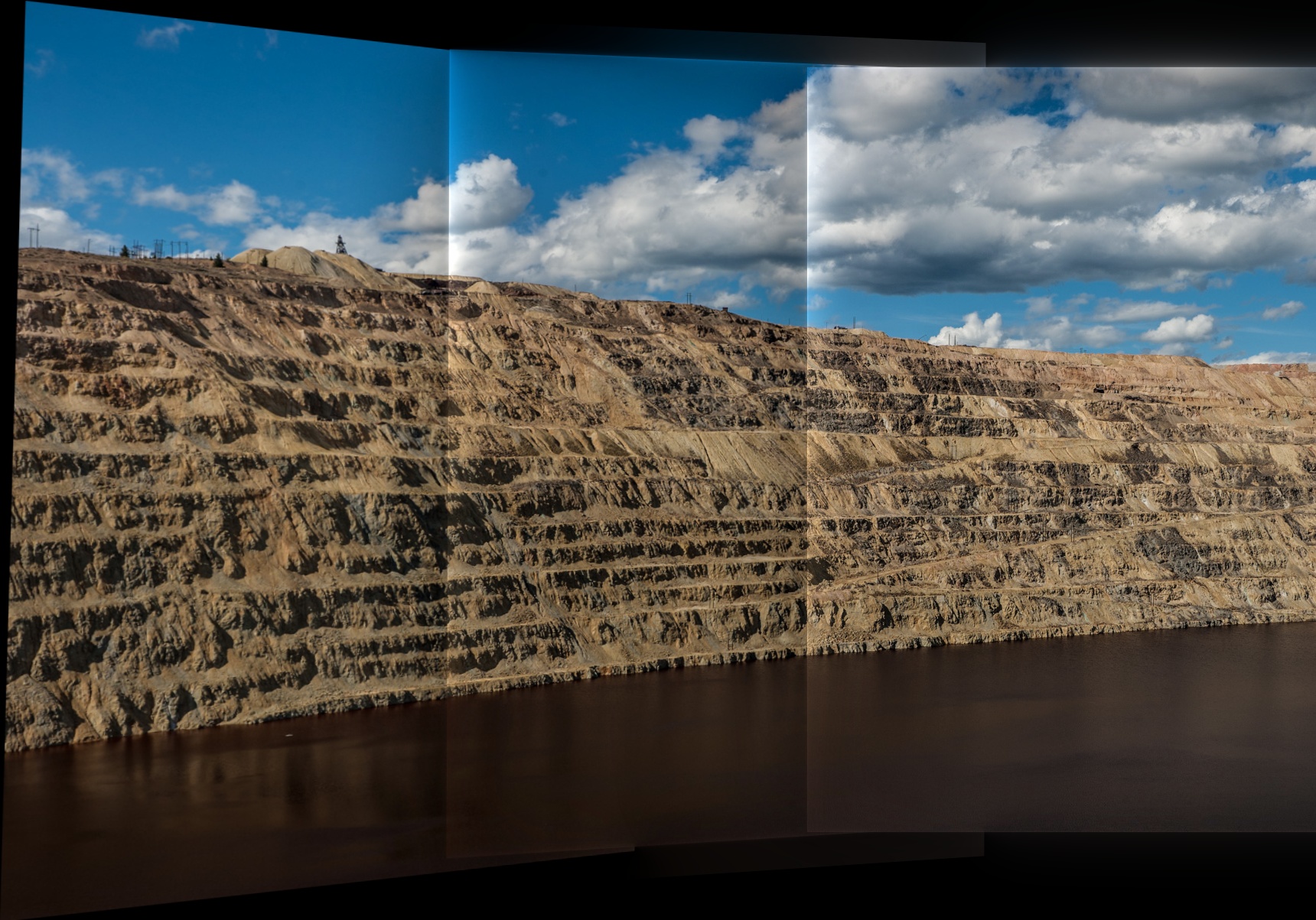

HDR

High dynamic range (HDR) imaging is an interesting way to capture more detail in a scene than a single exposure typically allows: multiple images are taken at different exposures and the results are combined.

For this project, I implemented the radiance map technique discussed in "Recovering High Dynamic Range Radiance Maps from Photographs", by Debevec & Malik.
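
As an illustration of the final merge step in that paper, the sketch below computes the log radiance of each pixel as a weighted average of `g(Z) - ln(dt)` over the exposures, where `Z` is the pixel value, `dt` the exposure time, and `g` the log inverse camera response. It is a simplified sketch, not the project's code: it assumes 8-bit single-channel images, takes `g` as a precomputed 256-entry array (recovering `g` by least squares is the other half of the method and is omitted here), and the function names are hypothetical.

```python
import numpy as np

def hat_weight(z, z_min=0, z_max=255):
    """Triangle weighting that trusts mid-range pixel values the most."""
    z = np.asarray(z, dtype=float)
    mid = 0.5 * (z_min + z_max)
    return np.where(z <= mid, z - z_min, z_max - z)

def radiance_map(images, exposure_times, g):
    """Merge uint8 exposures into a radiance map.

    `g` is assumed to be a 256-entry array holding the log inverse
    response curve, so g[z] = ln(exposure) for pixel value z.
    """
    num = np.zeros(images[0].shape, dtype=float)
    den = np.zeros(images[0].shape, dtype=float)
    for img, dt in zip(images, exposure_times):
        w = hat_weight(img)
        num += w * (g[img] - np.log(dt))
        den += w
    # Pixels that are 0 or 255 in every exposure have zero total weight;
    # they fall back to ln(E) = 0 here, which is an arbitrary choice.
    ln_e = num / np.maximum(den, 1e-8)
    return np.exp(ln_e)
```

The weighting is what lets each exposure contribute only where it is well exposed: dark regions are taken mostly from long exposures and bright regions mostly from short ones.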

Midterm Project: Seam Carving

Seam Carving [Avidan 2007] is a content-aware image resizing technique. It computes an energy value for each pixel in a source image and then finds the connected path (seam) of lowest total energy through the image, either horizontally or vertically, effectively turning resizing into a minimization problem.
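
The minimization can be solved with dynamic programming. The following is a minimal NumPy sketch for vertical seams, offered as an illustration rather than the project's implementation. It assumes a gradient-magnitude energy function and 8-connected seams (each row's seam pixel is at most one column away from the previous row's), and the function names are hypothetical.

```python
import numpy as np

def energy_map(gray):
    """Gradient-magnitude energy: |dI/dx| + |dI/dy| (an assumed choice)."""
    gy, gx = np.gradient(gray.astype(float))
    return np.abs(gx) + np.abs(gy)

def find_vertical_seam(energy):
    """Return, for each row, the column of the minimum-energy vertical seam."""
    h, w = energy.shape
    cost = energy.astype(float).copy()

    # Forward pass: cost[r, c] = energy[r, c] + cheapest of the three
    # neighbours in the row above (up-left, up, up-right).
    for r in range(1, h):
        prev = cost[r - 1]
        left = np.concatenate(([np.inf], prev[:-1]))
        right = np.concatenate((prev[1:], [np.inf]))
        cost[r] += np.minimum(np.minimum(left, prev), right)

    # Backward pass: start at the cheapest bottom pixel and walk upward.
    seam = np.empty(h, dtype=int)
    seam[-1] = int(np.argmin(cost[-1]))
    for r in range(h - 2, -1, -1):
        c = seam[r + 1]
        lo, hi = max(c - 1, 0), min(c + 2, w)
        seam[r] = lo + int(np.argmin(cost[r, lo:hi]))
    return seam

def remove_vertical_seam(img, seam):
    """Delete one pixel per row, shrinking the image width by one."""
    h, w = img.shape[:2]
    keep = np.ones((h, w), dtype=bool)
    keep[np.arange(h), seam] = False
    return img[keep].reshape(h, w - 1, *img.shape[2:])
```

Repeating find-and-remove narrows the image one column at a time; horizontal seams can be handled by transposing the image first.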

Drone Photography